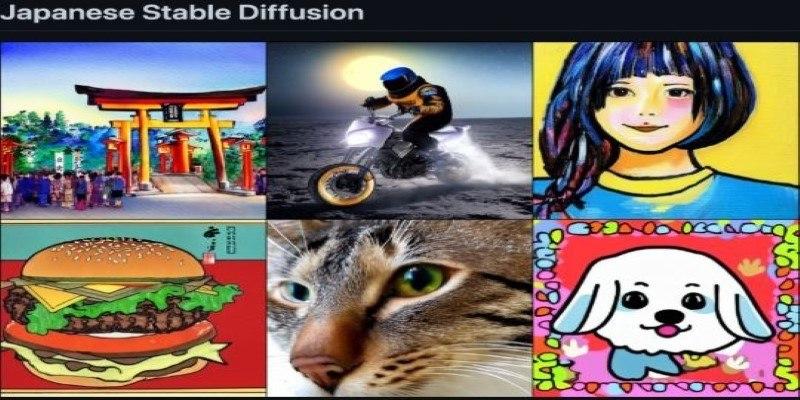

Stable Diffusion has brought fresh ways to create images using AI. While much of the focus has been on global or Western use, Japan has quietly shaped its own version of this tool. Japanese Stable Diffusion isn't just about language; it reflects a distinct creative approach. It blends Japan's art, storytelling traditions, and anime culture with detailed, fine-tuned AI models.

These models are designed to work in Japanese, capture emotional expression, and generate highly stylized visuals. It's a more focused and thoughtful use of technology shaped by the preferences of artists, developers, and fans in Japan.

What Makes Japanese Stable Diffusion Unique?

Japanese developers and creators have taken Stable Diffusion in a more specialized direction. While many global models aim for realism or abstract art, Japanese versions lean into anime and manga aesthetics. These aren't surface-level tweaks. Models are fine-tuned with large datasets drawn from fan art, visual novels, and game design references. Training often follows community standards and ethical sourcing practices, reflecting respect for the original content.

One of the biggest advantages is native-language support. Japanese Stable Diffusion can interpret prompts written in Japanese with greater accuracy, avoiding the awkward results that come from translating Japanese ideas into English first. The grammar, tone, and nuance of the language are better preserved, which improves the final image output.

Character design and emotional tone are treated with care. In anime, even small changes in the eyes or posture can change a character’s whole mood. These models are trained to notice and recreate those subtleties. This level of control makes them especially useful for storytellers and visual artists who want specific emotional cues in their work.

Tools and Platforms Behind the Movement

Many of the most effective Japanese Stable Diffusion models are hosted on open-source platforms. Models like "Anything," "AbyssOrangeMix," and "Counterfeit" are popular, particularly for anime-style outputs. These are not broad-use models. They are built to deliver a certain aesthetic and are trained on tightly curated datasets to achieve that goal.

Tagging systems play a key role. Instead of simple text prompts, Japanese creators often use detailed tags, such as those from image boards like Danbooru, to describe poses, backgrounds, facial expressions, and more. These tags give users more control and consistency in what the AI generates. It's a different workflow from natural language prompting, but it allows for much finer detail.
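To make the tag-based workflow concrete, here is a minimal sketch of how a prompt might be assembled from grouped tags. The `TagPrompt` class and its field names are hypothetical helpers invented for illustration, not part of any Stable Diffusion tool, and the example tags are simply illustrative Danbooru-style tags. The sketch assumes the model accepts a comma-separated tag string, which is common for anime-focused models but worth checking for the model you use.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TagPrompt:
    """Hypothetical helper: groups tags by role, then joins them into one prompt."""
    subject: List[str] = field(default_factory=list)
    expression: List[str] = field(default_factory=list)
    pose: List[str] = field(default_factory=list)
    background: List[str] = field(default_factory=list)

    def build(self) -> str:
        seen = set()
        ordered = []
        # Keep a fixed group order so the same inputs always give the same prompt.
        for group in (self.subject, self.expression, self.pose, self.background):
            for tag in group:
                norm = tag.strip().lower()  # tidy stray spaces and casing
                if norm and norm not in seen:  # skip empty and duplicate tags
                    seen.add(norm)
                    ordered.append(norm)
        return ", ".join(ordered)


prompt = TagPrompt(
    subject=["1girl", "long_hair"],
    expression=["smile"],
    pose=["sitting"],
    background=["cherry_blossoms", "outdoors"],
)
print(prompt.build())
# prints: 1girl, long_hair, smile, sitting, cherry_blossoms, outdoors
```

Grouping tags by role like this is only one possible design. Its appeal is that changing a single group, such as the expression, leaves the rest of the prompt untouched, which is the kind of consistency tag users tend to value.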

While tools like Automatic1111 and ComfyUI are used worldwide, Japanese developers customize them with scripts or add-ons to support Japanese text, user interfaces, and even voice inputs. These customizations help non-technical users participate. Apps like “AI Picasso” on phones and “Mochi Diffusion” on the Mac also bring these features to everyday devices, making the technology easier to use and more accessible.

There's also a strong community element. Developers share updates, fix bugs, and offer support through forums and GitHub repositories. The work is highly collaborative, often based on shared values rather than commercial goals.

The Cultural Layer: Why Japan Shapes This Tech Differently

Japan's influence on Stable Diffusion goes beyond software. The country's long-standing attention to aesthetic details shapes how AI is developed and used. Anime, for instance, isn't just a style—it’s a storytelling method built on visuals. Japanese Stable Diffusion responds to this by producing images that are more expressive, with cleaner lines, balanced compositions, and mood-driven elements.

Fan culture plays a big part, too. Many artists in Japan create "doujin"—fan-made art based on games, anime, or manga. Stable Diffusion is being used by these creators not to replace drawing but to explore concepts, visualize characters, and build ideas. It acts as a drafting tool, helping artists shape a direction before going into manual work.

Ethics are taken seriously. Some developers filter out copyrighted material from their training data or set clear boundaries around character likeness and usage. This approach comes from Japan’s cultural respect for original creators. Even when models are open-source, there’s an effort to keep them aligned with shared expectations around fairness.

Language again plays a strong role. Tools and prompts in Japanese allow users to describe their ideas more naturally. The process becomes more fluid and intuitive, especially for artists who don't work in English. It's about expressing your own voice without having to translate your ideas into a different language structure.

Future Trends and Global Influence

Japanese Stable Diffusion is already having an impact beyond Japan. Artists around the world are downloading and using these anime-trained models for character work, illustrations, and fan creations. Many prefer the more controlled, tag-based prompts because they reduce randomness and provide clearer direction.

There’s a growing trend of merging Stable Diffusion with other AI tools. Japanese developers are working on systems that generate a character’s appearance, voice, and personality as one package. These systems are already being used in indie games, animation, and VTuber content. It’s a full-stack creative toolset that starts with AI images and builds out from there.

At the same time, the community-driven, methodical pace of Japanese development sets a different tone. It shows that AI doesn’t always need to move fast or be disruptive. It can evolve through attention to detail, shared goals, and thoughtful use. This measured approach is appealing to many who want control and quality rather than constant updates.

Global developers are watching. The techniques developed in Japan—such as better tagging systems, character consistency, and nuanced expressions—are being adopted elsewhere. As more creators explore this space, Japanese Stable Diffusion will likely influence how visual AI tools are designed and used around the world.

Conclusion

Japanese Stable Diffusion stands out not just for what it creates but for how it approaches creation. It's a more intentional version of image generation, shaped by Japan's artistic values, language structure, and fan culture. By fine-tuning models for anime-style output, building better tools for Japanese users, and promoting responsible use, this version of Stable Diffusion offers something different—more specialized, more expressive, and more in sync with its users. As the global demand for character-focused, stylistic AI art grows, Japanese models and methods will continue to play a leading role—quietly, carefully, and with a distinct point of view.